when the first process exits workflow processing the queue is checked and if not empty the next import will start the import process.I have been running this application on my laptop for around 6 months without any issues. The other simultaneous processes are queued for later import. To avoid parallel imports the engine will allow only one import workflow on the same repository, data source or input map to proceed at the same time. The files are processed in order of the arrival date (last modification timestamp) on the file system.Īn data import through FileWatcher can lead to many simultaneous uploads if many data files have been added. The next instance can then acquire the lock and process newly arrived files. Only after the instance has finished processing the set of files it found while initiating processing, it releases the file lock. The instance which successfully acquired the lock accesses all the files in the directory and moves them into the done or rejected directory depending on the processing outcome. The instance ID used in the lock file is given by the JVM argument NODE_ID, which must be different for each member of the cluster. This file lock contains information about the instance which acquired the lock, so that an abandoned lock can be cleaned up by the correct instance. It creates a file with the file name specified in the LockFile property of the respective DataSet configuration. When one or more files are added for upload, one of the application cluster instances acquires a lock in the root directory of the DataSet. history) and determines whether the file has been loaded for this DataSet before.

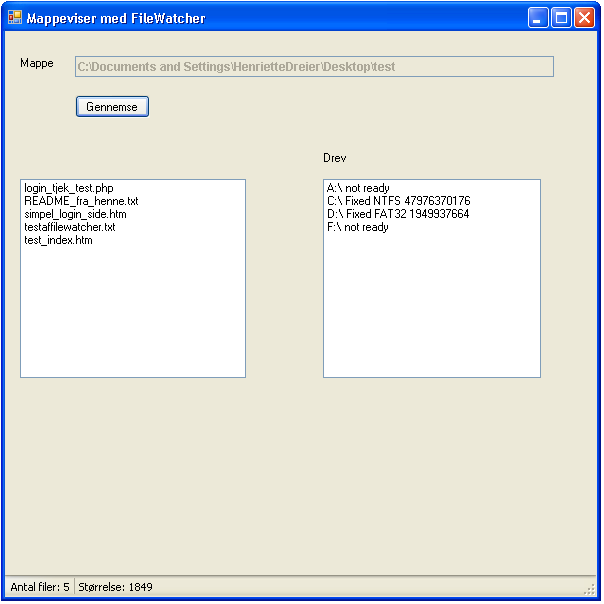

If the same file is being attempted to be processed, FileWatcher accesses the previously processed file names (which are in a file named. For example, date and time could be a part of the file name. For regular uploads it is advisable to devise a file naming convention which ensures uniqueness of a file name. The application assumes that the same file name indicates the same data and prohibits this scenario. One example for a processing failure is when you try to upload a file with the same file name as a previously loaded file. If the processing failed, the file is moved into the 'rejected' directory to facilitate reprocessing after the error condition has been resolved. When a file is successfully processed it is moved into the 'done' directory. While a newly uploaded file is being processed, the file ending is changed to the ending defined in the InProgressSuffix, to indicate that the file should not be processed by any other program.

When Import Workflow is initiated, a draft is created for each imported record.

Users can initiate imports either manually through the TIBCO MDM user interface (UI), or via FileWatcher in an automated lights-out manner. These files contain typically master data, which can uploaded into a data source and then imported into the repository. New data is imported through the user interface (UI) you upload a data source, and then import it into the repository.įileWatcher expedites importing data by polling for new files, and uploading them automatically. Data is imported into the catalog by defining data sources and mapping the data sources into a repository.
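
The file lifecycle described above (in-progress suffix, 'done' and 'rejected' directories, and the history check on file names) can be illustrated with a small Python sketch. This is not TIBCO MDM code: the `process_file` and `timestamped_name` helpers, the `.inprogress` suffix value, and the one-name-per-line layout of the history file are all assumptions made for illustration, and the sketch appends the suffix rather than replacing the file ending.

```python
import shutil
import tempfile
from datetime import datetime
from pathlib import Path
from typing import Callable

IN_PROGRESS_SUFFIX = ".inprogress"  # hypothetical value of InProgressSuffix
HISTORY_FILE = ".history"           # assumed format: one file name per line


def timestamped_name(stem: str, suffix: str, when: datetime) -> str:
    """Build a file name that embeds date and time to keep names unique."""
    return f"{stem}_{when:%Y%m%d_%H%M%S}{suffix}"


def process_file(path: Path, root: Path, importer: Callable[[Path], None]) -> str:
    """Process one file and return the directory it ended up in."""
    history = root / HISTORY_FILE
    seen = set(history.read_text().splitlines()) if history.exists() else set()
    original_name = path.name

    # Rename first so that other programs skip the file while it is in progress.
    working = path.with_name(original_name + IN_PROGRESS_SUFFIX)
    path.rename(working)

    try:
        if original_name in seen:
            # Same file name is treated as the same data, so reject it.
            raise ValueError(f"{original_name} was already loaded")
        importer(working)
        with history.open("a") as handle:
            handle.write(original_name + "\n")
        outcome = "done"
    except Exception:
        outcome = "rejected"

    # Move the file back under its original name into 'done' or 'rejected'.
    target_dir = root / outcome
    target_dir.mkdir(exist_ok=True)
    shutil.move(str(working), str(target_dir / original_name))
    return outcome


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        upload = root / "customers.csv"

        upload.write_text("id,name\n1,Ann\n")
        print(process_file(upload, root, lambda p: None))  # first load

        upload.write_text("id,name\n2,Bob\n")
        print(process_file(upload, root, lambda p: None))  # same name again

        print(timestamped_name("customers", ".csv", datetime(2024, 1, 2, 3, 4, 5)))
```

In the usage section, the second upload reuses the name `customers.csv` and is therefore rejected, which is why a naming convention such as the one in `timestamped_name` is useful for regular uploads.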

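The cluster locking described above (a lock file in the DataSet root that records the owning instance, with files processed in order of last modification time) can be sketched in Python as follows. This is a minimal illustration, not the product's implementation: the function names, the `dataset.lock` file name, and the choice to store only the node ID in the lock file are assumptions. The node ID stands in for the value passed through the NODE_ID JVM argument.

```python
from __future__ import annotations

import os
import tempfile
from pathlib import Path


def try_acquire_lock(lock_path: Path, node_id: str) -> bool:
    """Atomically create the lock file; return False if it already exists."""
    try:
        # O_CREAT | O_EXCL fails when the file exists, so only one caller wins.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as handle:
        handle.write(node_id)  # record the owner so a stale lock can be traced
    return True


def release_lock(lock_path: Path, node_id: str) -> None:
    """Remove the lock file, but only if this node is the recorded owner."""
    if lock_path.exists() and lock_path.read_text() == node_id:
        lock_path.unlink()


def pending_files(root: Path, lock_name: str) -> list[Path]:
    """Return the regular files in root, oldest modification time first."""
    files = [p for p in root.iterdir() if p.is_file() and p.name != lock_name]
    return sorted(files, key=lambda p: p.stat().st_mtime)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        lock = root / "dataset.lock"  # hypothetical LockFile value

        # Create three files with explicit modification times.
        base = 1_000_000_000
        for name, offset in [("b.csv", 200), ("a.csv", 300), ("c.csv", 100)]:
            path = root / name
            path.write_text("data")
            os.utime(path, (base + offset, base + offset))

        print(try_acquire_lock(lock, "node-1"))  # first instance gets the lock
        print(try_acquire_lock(lock, "node-2"))  # second instance must wait
        print([p.name for p in pending_files(root, lock.name)])
        release_lock(lock, "node-1")
        print(try_acquire_lock(lock, "node-2"))  # lock is free again
```

Creating the lock file with an exclusive-create flag keeps the check and the creation in one atomic step, which avoids two instances both believing they hold the lock.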